Salesforce recently announced that it’s reversing its decision to take away its Data Recovery Service. The tool was provided as a last resort for companies on Salesforce in the event of data loss, and was retired in the summer of 2020. Salesforce gave several reasons for its initial decision: notably the small number of customers using it, and the existence of third-party tools in the ecosystem providing the same service.

The revival of this tool may be significant news to some companies, but it should make little difference to teams on Salesforce. Here’s why:

The service is limited

The Data Recovery service wasn’t designed to be a serious backup solution. It offers a fail-safe. Every team using Salesforce wants the comfort of knowing their data is protected. But when you dig into what the service provides, the offering is less than ideal: it costs $10,000, and it can take 6-8 weeks for a business to receive the data in CSV format. The business must then restore the data itself, with no guarantee that it’s up to date - Salesforce will send you data from an unspecified point in time within the previous 3 months.

The turnaround time can be particularly painful for teams. Two of the most important parameters for teams building a data disaster recovery plan are the Recovery Point Objective (RPO) and the Recovery Time Objective (RTO). The RPO is the period of time that can pass during a disruption before the amount of data lost exceeds the Business Continuity Plan’s threshold, while the RTO is the target for how long it may take to recover from that disruption. The recommended RPO is within a day, while the recommended RTO is within a few hours.

For teams using Salesforce’s Data Recovery service, the effective RPO is up to 3 months, while the effective RTO is the length of time taken to notice the data loss, plus 6-8 weeks for the data to be retrieved, plus the amount of time needed to restore it. Compare this to Gearset, which lets you back up and recover data in a few clicks. Having data only a button-press away at all times provides invaluable confidence and stability for business continuity. It ensures that the company can be up and running again smoothly within a short amount of time, regardless of the severity of the loss.
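To make that arithmetic concrete, here’s a minimal Python sketch that adds up the worst case for the Data Recovery service and checks it against the recommended targets. The names (`RecoveryEstimate`, `data_recovery_service_estimate`, `meets_targets`) are hypothetical and not part of any Salesforce or Gearset API. The 90-day approximation of "3 months", the use of the upper end of the 6-8 week range, and the 4-hour reading of "a few hours" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class RecoveryEstimate:
    rpo: timedelta  # worst-case age of the data you get back
    rto: timedelta  # worst-case time from data loss to full recovery


def data_recovery_service_estimate(
    time_to_notice: timedelta, restore_effort: timedelta
) -> RecoveryEstimate:
    """Worst-case RPO/RTO for the Data Recovery service as described in this post."""
    snapshot_age = timedelta(days=90)  # assumption: "previous 3 months" ~ 90 days
    delivery = timedelta(weeks=8)      # assumption: upper end of the 6-8 week range
    return RecoveryEstimate(
        rpo=snapshot_age,
        rto=time_to_notice + delivery + restore_effort,
    )


def meets_targets(
    estimate: RecoveryEstimate,
    target_rpo: timedelta = timedelta(days=1),   # "within a day"
    target_rto: timedelta = timedelta(hours=4),  # assumption: "a few hours" ~ 4 hours
) -> bool:
    """Return True only if both RPO and RTO fall within the targets."""
    return estimate.rpo <= target_rpo and estimate.rto <= target_rto


if __name__ == "__main__":
    # Illustrative inputs: 2 days to notice the loss, 3 days to re-import the CSVs.
    estimate = data_recovery_service_estimate(timedelta(days=2), timedelta(days=3))
    print(estimate.rto.days)        # 61
    print(meets_targets(estimate))  # False
```

Even with generous inputs, the 8-week delivery window alone puts the RTO far beyond a target measured in hours, and the restore effort only adds to it.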

There’s a false sense of security

Salesforce has announced that alongside the return of its recovery service, it’ll also be piloting backup tools built natively on the platform. The introduction of additional options for teams underlines how important backup is for Salesforce orgs. But there’s a serious problem with using native backup tools: having your data backup and recovery tools tied to the same infrastructure as your live data goes against basic backup best practice. If Salesforce goes down, you still need to be able to access your backup data.

By having a backup solution that exists separately, you are mitigating the risk of losing both data and backups in a single disaster. Gearset, for example, stores backup data at the AWS data center in your chosen region - either the US (US West 2) or Ireland (EU West 1). Our users know that even in a worst-case scenario like Salesforce experiencing a severe outage, their data and metadata are secure.

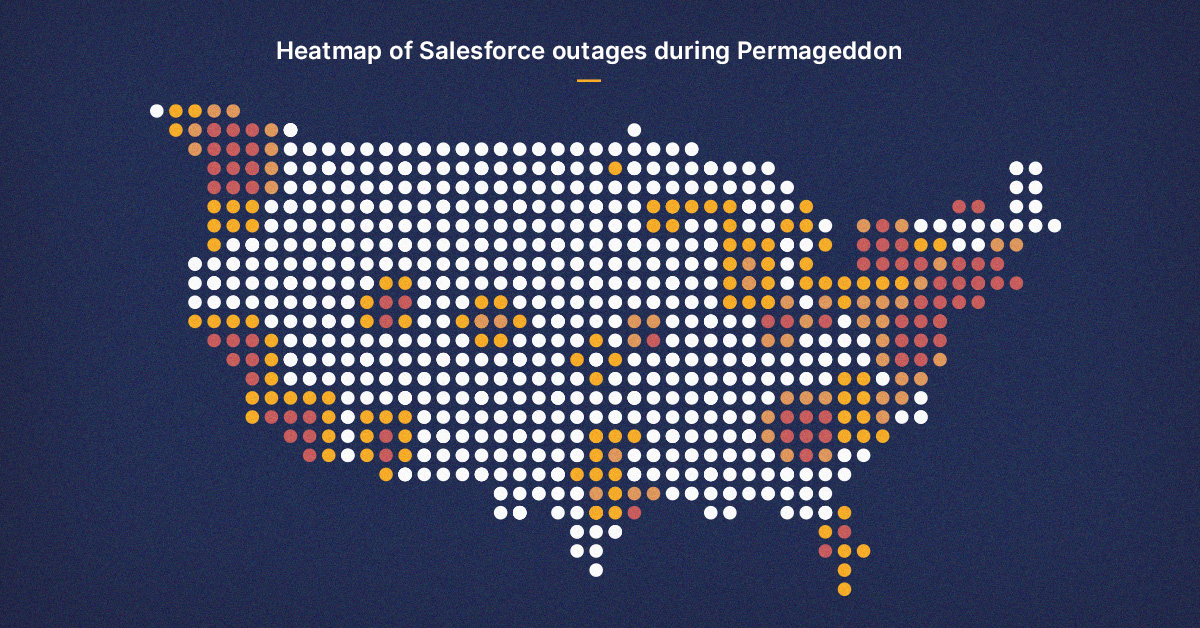

This highlights another potential issue with the Data Recovery service: Salesforce only gives you data, and not the metadata needed to fully restore data and permissions. An example of how these risks might lead to larger problems is the ‘Permageddon’ incident in 2019, when any team who had ever integrated Pardot into their Salesforce orgs found their permissions model had been corrupted. This led to Salesforce deleting all affected permissions, and admins were forced to rebuild their org’s profiles and permissions manually.

The best option is still Gearset

The best-performing DevOps teams should already have a comprehensive backup and recovery solution, so the re-introduction of Salesforce’s Data Recovery Service should have no effect on their processes. For a business to succeed on Salesforce, data must be secure, backed up, and retrievable. The threat of loss or corruption can come from many different sources.

Server outages can cause a variety of issues - from a smaller-scale example like the NA14 Salesforce outage in 2016, which caused the loss of several hours’ worth of data, to the recent OVH data center fire, which may lead to some business data being permanently lost.

Further, individual integrations within Salesforce can alter or delete data, with the Pardot example in 2019 showing how seemingly innocuous interactions between Salesforce and an integration can have unforeseen disastrous effects.

Finally, human error is one of the leading causes of data loss. We all make mistakes, and some of those mistakes can cause serious damage. Whether it’s a developer running a dodgy command, or an admin accidentally overwriting thousands of records, the risks are always there. Not only does Gearset offer a reactive solution to recover lost data, but we also offer proactive prevention measures with our smart problem analyzer and the ability to roll back deployments.

Choosing a robust backup solution

Want to learn more about protecting your org data? Check out Gearset’s Salesforce backup solution or our free backup ebook to see how you can safeguard against Salesforce data loss.

And when you’re ready to level up your backup, book a demo to see why Gearset is the best solution for securing and restoring your data.